Class Imbalanced Learning


Research Overview

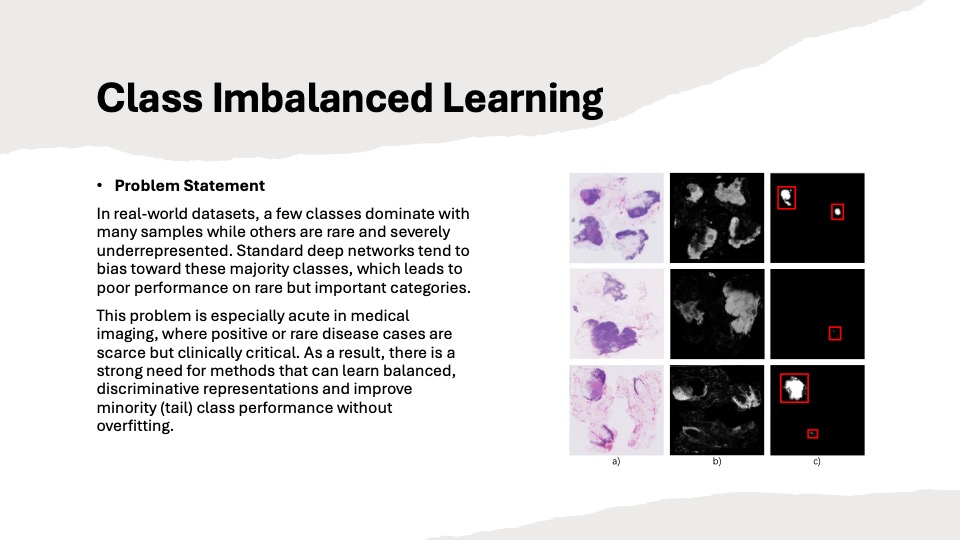

Class-imbalanced and long-tailed learning address scenarios where some classes have many samples while others have very few. Standard deep networks tend to overfit majority classes and under-represent rare categories, which in medical imaging are often the clinically important ones. The result is biased decision boundaries and poor minority-class performance. Our contributions span both medical and natural image domains. In medical imaging, we propose imbalance-aware methods such as a deep feature graph attention network for histopathology (ISBI 2022), which models patch–patch relationships to better capture minority patterns, and adaptive unified contrastive learning with graph-based feature aggregation (ESWA), which reweights contrastive objectives to reduce majority-class bias. In the natural image domain, we develop a decoupled optimisation framework (AAAI 2024) for long-tailed recognition that separates parameter groups and optimises each with a tailored objective, significantly improving performance across head, medium, and tail classes.
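To make the majority-class bias concrete, the sketch below shows inverse-frequency loss reweighting, a common generic baseline for imbalanced classification. It is not the method of any of the papers mentioned above; the function names (`inverse_frequency_weights`, `weighted_cross_entropy`) and the 90/10 toy split are assumptions chosen purely for illustration.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by 1 / count, rescaled so the weights average to 1 over classes."""
    counts = Counter(labels)
    raw = {cls: 1.0 / n for cls, n in counts.items()}
    scale = len(raw) / sum(raw.values())
    return {cls: w * scale for cls, w in raw.items()}


def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean negative log-likelihood.

    probs   : list of dicts mapping class -> predicted probability
    labels  : list of true classes, same length as probs
    weights : dict mapping class -> loss weight
    """
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    norm = sum(weights[y] for y in labels)
    return total / norm


# Toy long-tailed dataset (hypothetical): 90 "head" samples, 10 "tail" samples.
labels = ["head"] * 90 + ["tail"] * 10

# A model that is biased towards the head class on every sample.
probs = [{"head": 0.9, "tail": 0.1} for _ in labels]

weights = inverse_frequency_weights(labels)
uniform = {cls: 1.0 for cls in weights}

plain_loss = weighted_cross_entropy(probs, labels, uniform)
balanced_loss = weighted_cross_entropy(probs, labels, weights)
```

With uniform weights, the head-biased model is penalised only lightly because tail samples are rare. With inverse-frequency weights, each class contributes equally to the loss, so the same model incurs a much larger penalty for its tail-class errors.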

Future Direction

Promising directions include extending our imbalance-aware methods to multi-modal and federated settings, integrating them with large-scale vision/foundation models, and developing automatic, data-centric strategies (e.g., adaptive augmentation or reweighting) that can handle long-tailed distributions with minimal manual tuning.