Fairness, Debiasing and Robustness

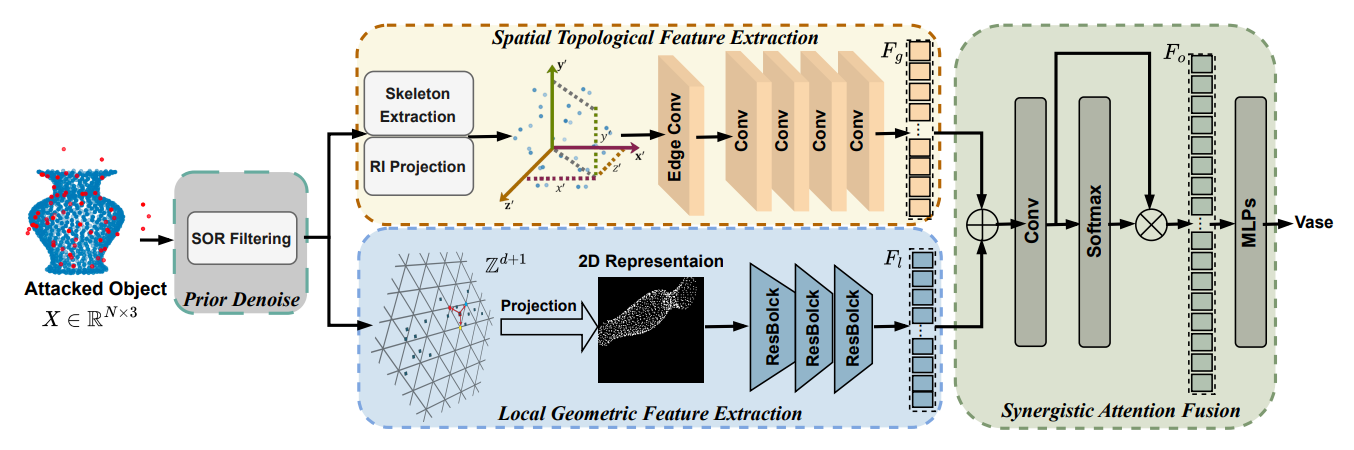

SynerGuard: A Robust Framework for Point Cloud Classification via Local Geometry and Spatial Topology

Point cloud recognition models are known to be vulnerable to adversarial attacks. State-of-the-art defenses either focus on partial features of the point cloud, which limits their effectiveness, or rely heavily on known adversarial examples, which reduces their generalizability; others, such as point cloud reconstruction, degrade the classifier's accuracy on clean examples. To address these limitations, we introduce SynerGuard, a novel robust point cloud classification framework that mitigates adversarial attacks by considering comprehensive geometric and topological attributes of the point cloud, without relying on known adversarial examples and while maintaining classification accuracy on clean examples. We comprehensively test SynerGuard against seven attack types from three leading adversarial attack approaches on two widely used datasets, ModelNet40 and ShapeNetPart.

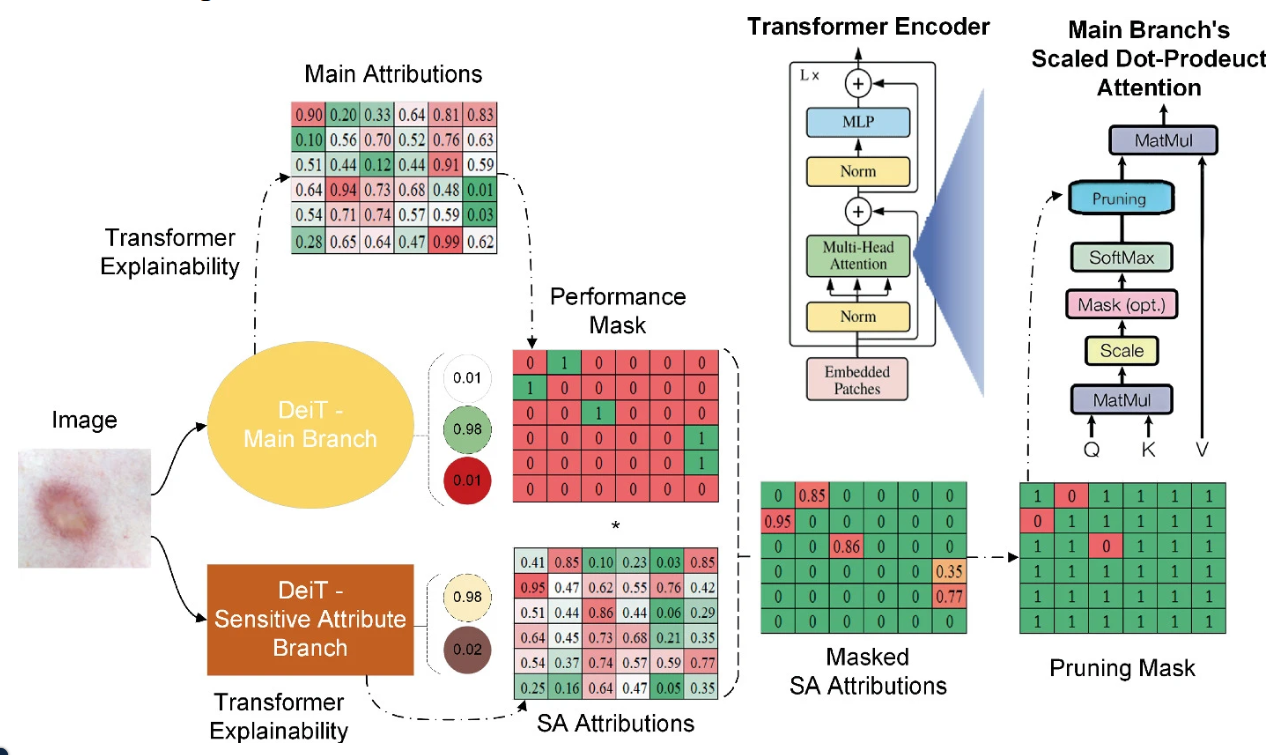

XTranPrune: eXplainability-Aware Transformer Pruning for Bias Mitigation in Dermatological Disease Classification

Numerous studies have demonstrated the effectiveness of deep learning models in medical image analysis. However, these models often exhibit performance disparities across different demographic cohorts, undermining their trustworthiness in clinical settings. While previous efforts have focused on bias mitigation techniques for traditional encoders, the increasing use of transformers in the medical domain calls for novel fairness enhancement methods. Additionally, the efficacy of explainability methods in improving model fairness remains unexplored. To address these gaps, we introduce XTranPrune, a bias mitigation method tailored for vision transformers. Leveraging state-of-the-art explainability techniques, XTranPrune generates a pruning mask to remove discriminatory modules while preserving performance-critical ones.
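
The idea of a pruning mask that removes discriminatory modules while preserving performance-critical ones can be illustrated with a minimal sketch. This is not the XTranPrune algorithm: the function `build_pruning_mask`, the two per-unit score arrays, and the `prune_ratio` and `protect_ratio` parameters are all hypothetical, and the scores are assumed to come from some attribution method that is not shown here. The sketch only shows one plausible way to combine a "task importance" score and a "bias importance" score into a keep/prune mask.

```python
import numpy as np


def build_pruning_mask(task_scores, bias_scores, prune_ratio=0.1, protect_ratio=0.3):
    """Return a boolean keep-mask (True = keep) over model units.

    Assumed inputs (hypothetical, not taken from the paper):
      task_scores: attribution of each unit toward the main task.
      bias_scores: attribution of each unit toward the sensitive attribute.
    """
    task_scores = np.asarray(task_scores, dtype=float)
    bias_scores = np.asarray(bias_scores, dtype=float)
    if task_scores.shape != bias_scores.shape:
        raise ValueError("score arrays must have the same shape")

    n = task_scores.size
    n_protect = int(round(protect_ratio * n))
    n_prune = int(round(prune_ratio * n))

    flat_task = task_scores.ravel()
    flat_bias = bias_scores.ravel()

    # Units most important to the main task are never pruned.
    protected = np.zeros(n, dtype=bool)
    if n_protect > 0:
        protected[np.argsort(flat_task)[-n_protect:]] = True

    # Among the remaining units, prune those with the highest bias attribution.
    candidates = np.flatnonzero(~protected)
    order = candidates[np.argsort(flat_bias[candidates])[::-1]]
    keep = np.ones(n, dtype=bool)
    keep[order[:n_prune]] = False
    return keep.reshape(task_scores.shape)


# Toy example: unit 0 has the highest bias score but is also the most
# task-critical, so it is protected and unit 1 is pruned instead.
mask = build_pruning_mask(
    task_scores=[0.9, 0.1, 0.2, 0.8],
    bias_scores=[0.95, 0.7, 0.1, 0.2],
    prune_ratio=0.25,
    protect_ratio=0.25,
)
print(mask)
```

In a real vision transformer the mask would be applied to structures such as attention heads or MLP neurons; which structures are scored and how the two scores are obtained are choices this sketch leaves open.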