Interpretability

Interpretable Image Classification via Non-parametric Part Prototype Learning | Link in here

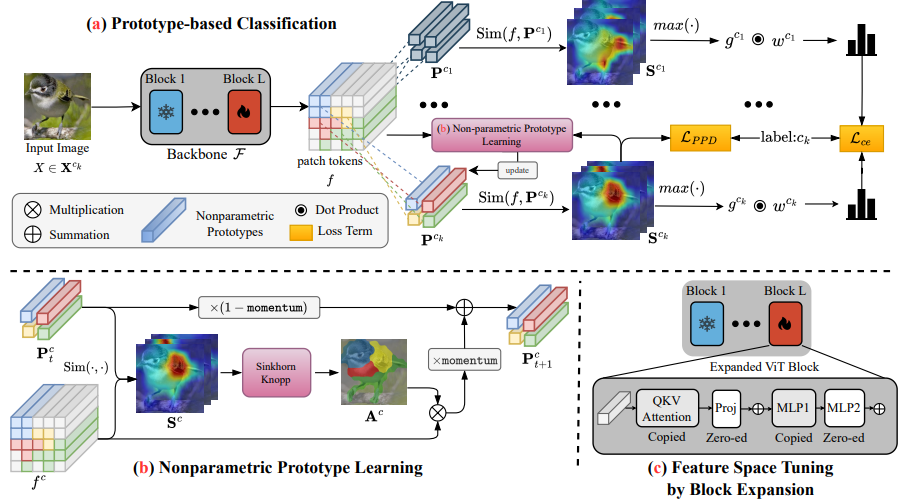

Classifying images with an interpretable decision-making process is a long-standing problem in computer vision. In recent years, Prototypical Part Networks have gained traction as an approach to self-explainable neural networks, owing to their ability to mimic human visual reasoning by providing explanations based on prototypical object parts. However, the quality of the explanations generated by these methods leaves room for improvement, as the prototypes usually focus on repetitive and redundant concepts. Leveraging recent advances in prototype learning, we present a framework for part-based interpretable image classification that learns a set of semantically distinctive object parts for each class and provides diverse and comprehensive explanations. The core of our method is to learn the part prototypes in a non-parametric fashion, by clustering deep features extracted from foundation vision models that encode robust semantic information.
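The idea of obtaining part prototypes by clustering, rather than by gradient descent, can be illustrated with a small sketch. This is not the paper's implementation: plain k-means stands in for whatever clustering the authors use, the patch features are assumed to come from some frozen vision backbone, and the names `kmeans`, `learn_prototypes`, and `classify` are hypothetical.

```python
import numpy as np

def kmeans(features, k, iters=20, seed=0):
    """Plain k-means; returns k cluster centers for an (n, d) feature array."""
    rng = np.random.default_rng(seed)
    # Initialize centers from k distinct data points (fancy indexing copies).
    centers = features[rng.choice(len(features), size=k, replace=False)].astype(float)
    for _ in range(iters):
        # Squared Euclidean distance from every feature to every center: (n, k).
        dists = ((features[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = features[labels == j]
            if len(members) > 0:  # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    return centers

def learn_prototypes(patch_features_by_class, k):
    """Assumption: one (n_patches, d) array of backbone features per class."""
    return {c: kmeans(f, k) for c, f in patch_features_by_class.items()}

def classify(patch_features, prototypes):
    """Score each class by how well its prototypes are matched in the image."""
    def unit(x):
        return x / np.linalg.norm(x, axis=1, keepdims=True)
    patches = unit(patch_features)
    scores = {}
    for c, protos in prototypes.items():
        sim = patches @ unit(protos).T  # cosine similarity, (n_patches, k)
        # Best-matching patch per prototype, averaged over the class prototypes.
        scores[c] = float(sim.max(axis=0).mean())
    return max(scores, key=scores.get), scores
```

Because each prototype is a cluster center, the per-prototype similarity map (`sim` above) can be inspected directly to see which image patches support a prediction, which is the kind of part-level explanation the abstract describes.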

InterREC: An Interpretable Method for Referring Expression Comprehension | Link in here

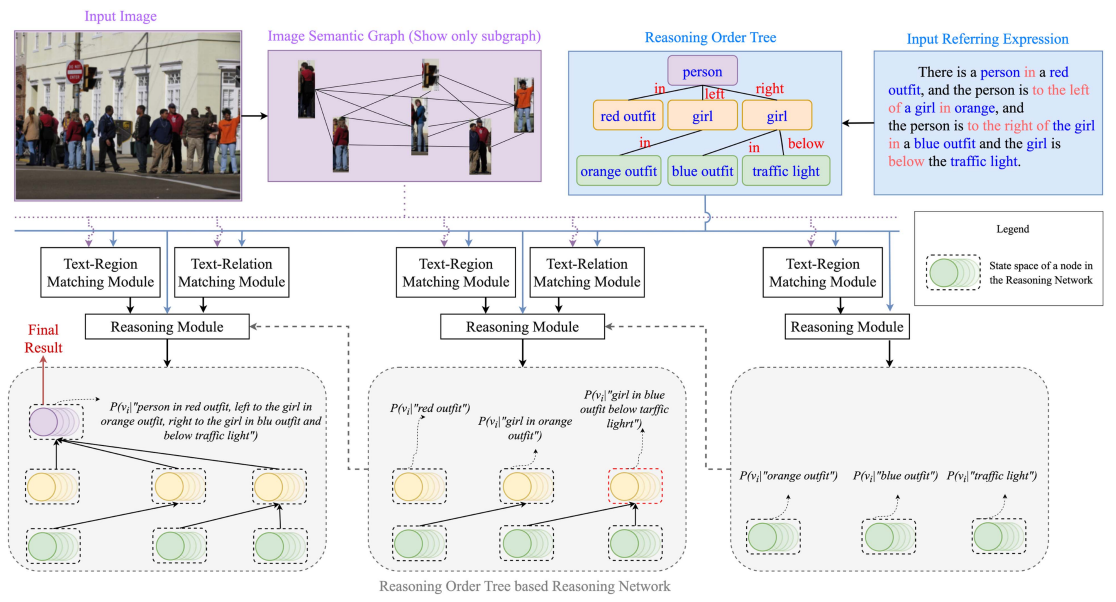

Referring Expression Comprehension (REC) aims to locate the target object in an image according to a referring expression. Most existing implicit reasoning-based REC methods lack interpretability, while explicit reasoning-based REC methods have lower accuracy. To achieve competitive accuracy while providing adequate interpretability, we propose a novel explicit reasoning-based method named InterREC. First, to address the challenge of multi-modal understanding, we design two neural network modules based on text-image representation learning: a Text-Region Matching Module that aligns objects in the image with noun phrases in the expression, and a Text-Relation Matching Module that aligns relations between objects in the image with relational phrases in the expression. Second, we design a Reasoning Order Tree for handling complex expressions, which decomposes a complex expression into multiple object-relation-object triplets, thereby determining the inference order and reducing the difficulty of reasoning. Finally, to make each reasoning step interpretable, we design an explicit reasoning method based on a Bayesian network.
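To make the triplet-based reasoning concrete, here is a rough sketch of how beliefs over candidate regions could be propagated along a tree of object-relation-object triplets. It is a simplified sum-product style pass, not InterREC's actual Bayesian network. The tree encoding, the function `ground`, and the score tables `region_match` and `relation_match` (standing in for the outputs of the two matching modules) are all assumptions made for illustration.

```python
import numpy as np

def ground(node, region_match, relation_match):
    """Return a normalized belief over candidate regions for the phrase at `node`.

    node           : (noun, [(relation, child_node), ...]); children are resolved
                     first, so the tree fixes the inference order.
    region_match   : noun -> array of length R, score that region i matches the noun.
    relation_match : relation -> (R, R) array, entry [i, j] is the score that
                     region i stands in that relation to region j.
    """
    noun, children = node
    belief = np.asarray(region_match[noun], dtype=float).copy()
    for relation, child in children:
        child_belief = ground(child, region_match, relation_match)
        # Marginalize over where the related object is, weighted by its belief.
        belief *= np.asarray(relation_match[relation], dtype=float) @ child_belief
    total = belief.sum()
    return belief / total if total > 0 else belief

# "the cup on the table" as a single triplet (cup, on, table).
# Hypothetical regions: 0 = cup on the table, 1 = cup on the floor, 2 = table.
tree = ("cup", [("on", ("table", []))])
region_match = {
    "cup": np.array([0.5, 0.5, 0.0]),
    "table": np.array([0.05, 0.05, 0.9]),
}
relation_match = {
    "on": np.array([
        [0.0, 0.0, 0.9],
        [0.0, 0.0, 0.1],
        [0.0, 0.0, 0.0],
    ]),
}
belief = ground(tree, region_match, relation_match)
target_region = int(belief.argmax())
```

Each intermediate belief vector is itself an explanation: it shows which regions were considered for "table" before the "on" relation narrowed down the candidates for "cup".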